サポートベクタマシン実装編; Code of Support Vector Machine

概要

この記事ではサポートベクタマシンの実行サンプル、結果、そして実装について述べる。

サポートベクタマシンの理論についてはこちら

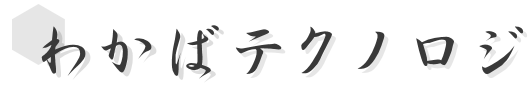

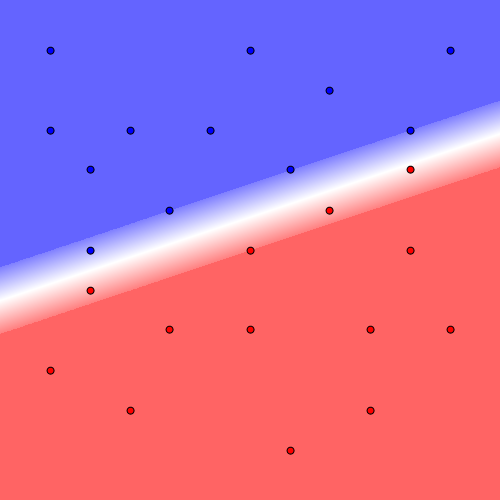

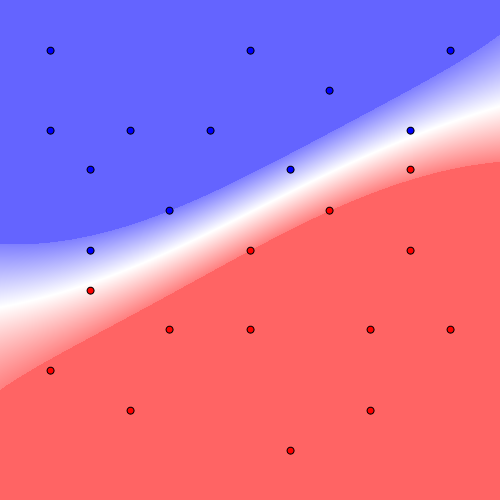

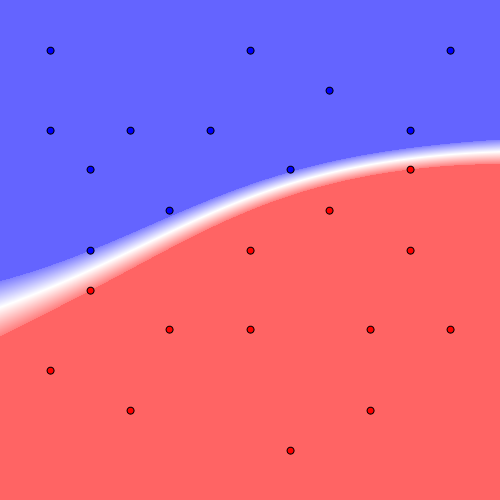

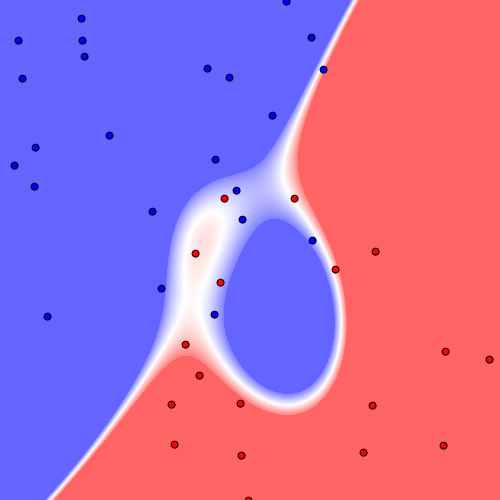

実行サンプルおよび結果では線形分離可能、線形分離不可能、ランダム配置時の3パターンを以下に示す。

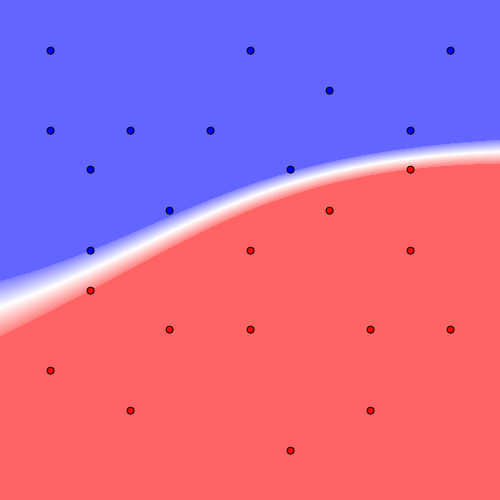

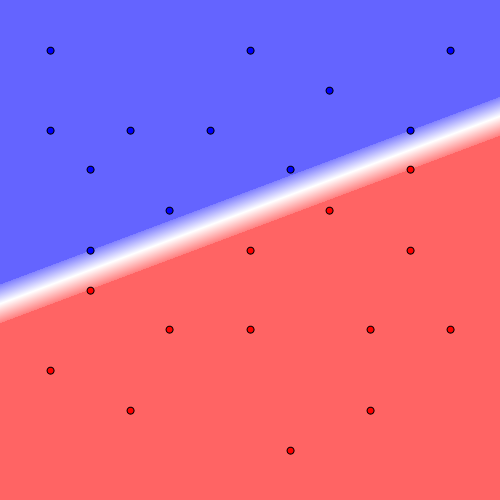

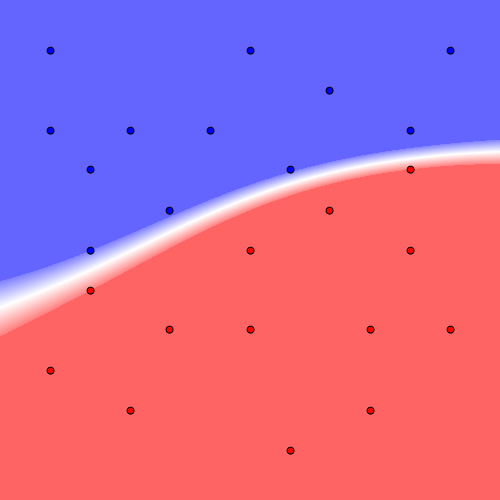

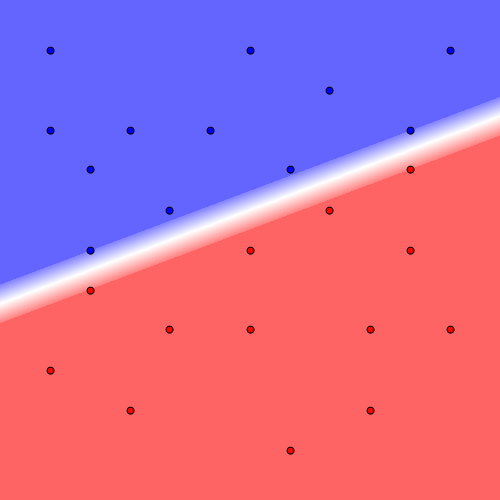

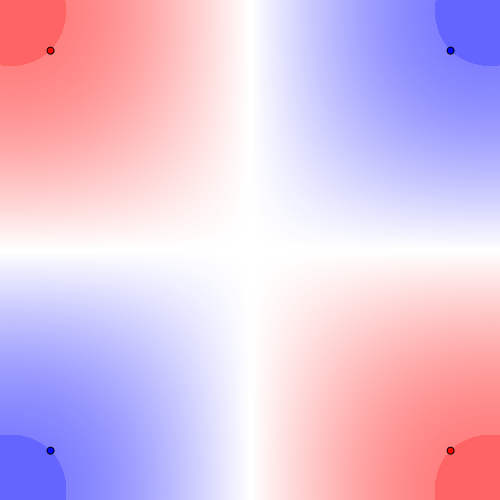

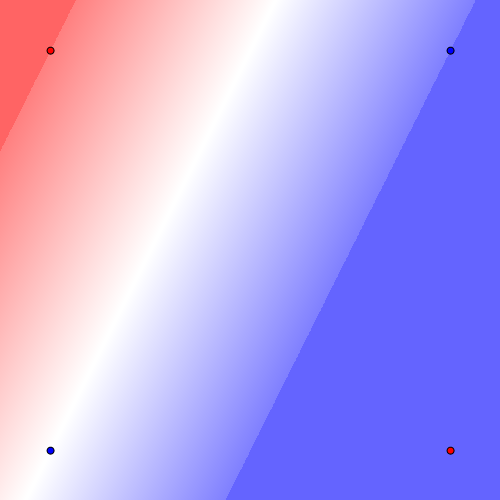

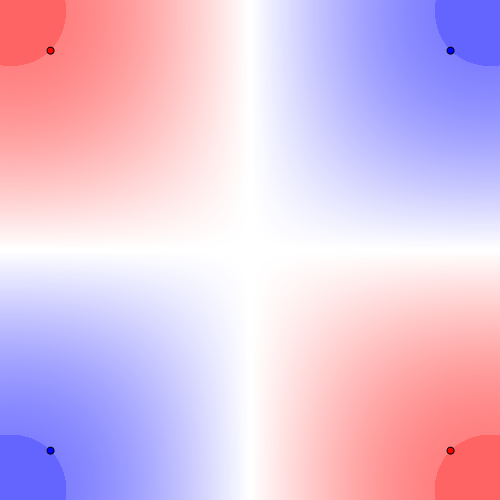

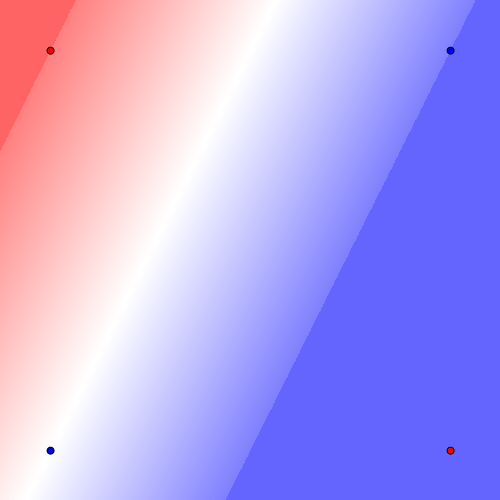

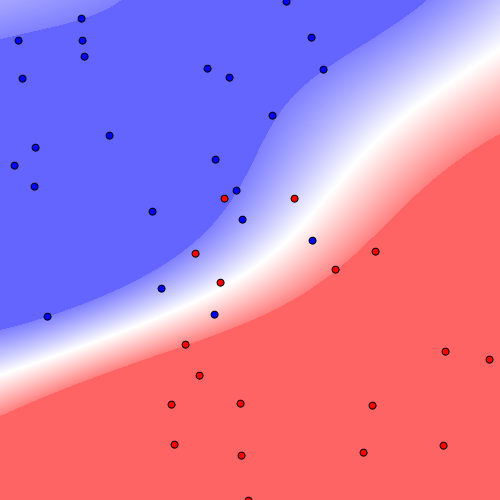

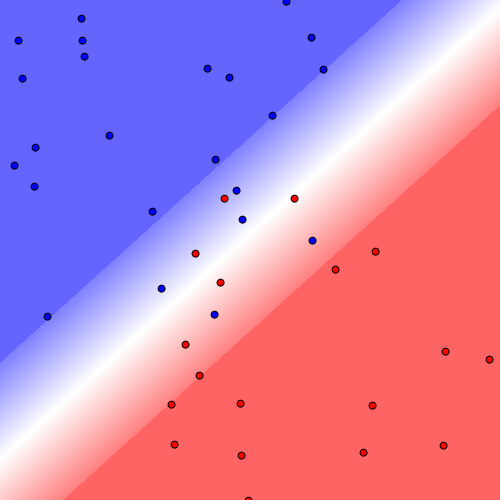

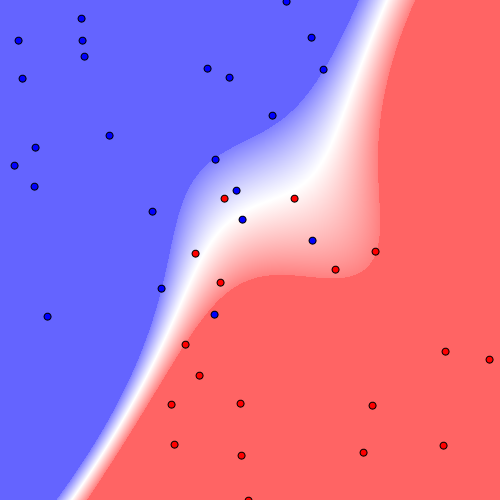

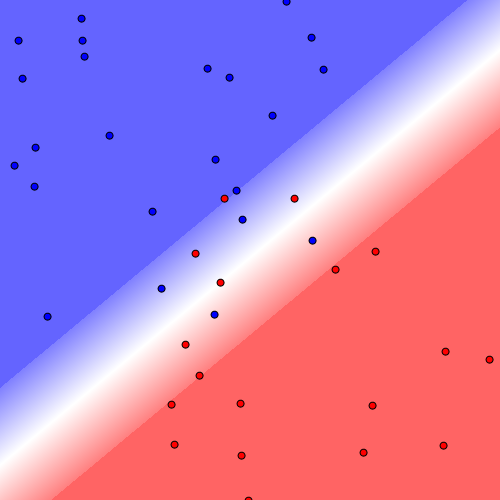

以降の画像は左が線形サポートベクタマシン、右がガウシアンサポートベクタマシンの結果になる。

Costを大きくすれば誤識別は少なくなるが、過学習が起きやすくなる。

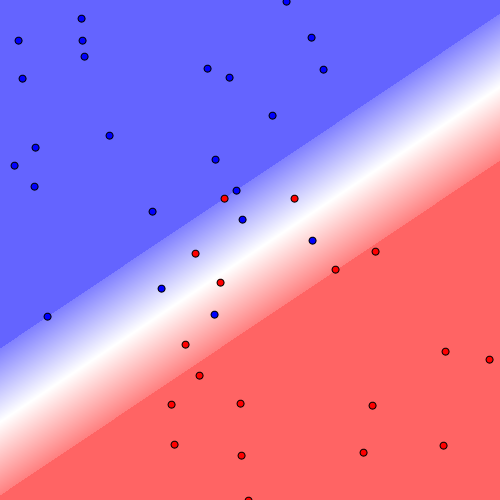

線形分離可能

const double cost = 10000;

List<Vector> positive_vectors = new List<Vector>(), negative_vectors = new List<Vector>();

positive_vectors.Add(new Vector(-1.0, -1.0));

positive_vectors.Add(new Vector( 0.0, -1.0));

positive_vectors.Add(new Vector(+1.0, -1.0));

positive_vectors.Add(new Vector(+0.4, -0.8));

positive_vectors.Add(new Vector(-1.0, -0.6));

positive_vectors.Add(new Vector(-0.6, -0.6));

positive_vectors.Add(new Vector(-0.2, -0.6));

positive_vectors.Add(new Vector(+0.8, -0.6));

positive_vectors.Add(new Vector(-0.8, -0.4));

positive_vectors.Add(new Vector(+0.2, -0.4));

positive_vectors.Add(new Vector(-0.4, -0.2));

positive_vectors.Add(new Vector(-0.8, 0.0));

negative_vectors.Add(new Vector(+0.8, -0.4));

negative_vectors.Add(new Vector(+0.4, -0.2));

negative_vectors.Add(new Vector( 0.0, 0.0));

negative_vectors.Add(new Vector(+0.8, 0.0));

negative_vectors.Add(new Vector(-0.8, +0.2));

negative_vectors.Add(new Vector(-0.4, +0.4));

negative_vectors.Add(new Vector( 0.0, +0.4));

negative_vectors.Add(new Vector(+0.6, +0.4));

negative_vectors.Add(new Vector(+1.0, +0.4));

negative_vectors.Add(new Vector(-1.0, +0.6));

negative_vectors.Add(new Vector(-0.6, +0.8));

negative_vectors.Add(new Vector(+0.6, +0.8));

negative_vectors.Add(new Vector(+0.2, +1.0));

SupportVectorMachine linear_svm = new LinearSupportVectorMachine(cost: cost);

linear_svm.Learn(2, positive_vectors, negative_vectors);

Plot(linear_svm, positive_vectors, negative_vectors, $"s1_linear_cost{cost}_svm.png");

SupportVectorMachine gaussian_svm = new GaussianSupportVectorMachine(cost: cost, sigma:1);

gaussian_svm.Learn(2, positive_vectors, negative_vectors);

Plot(gaussian_svm, positive_vectors, negative_vectors, $"s1_gaussian_cost{cost}_svm.png");

Cost=100

Cost=1000

Cost=10000

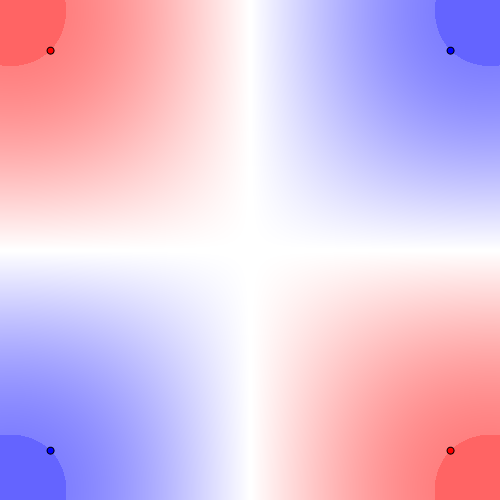

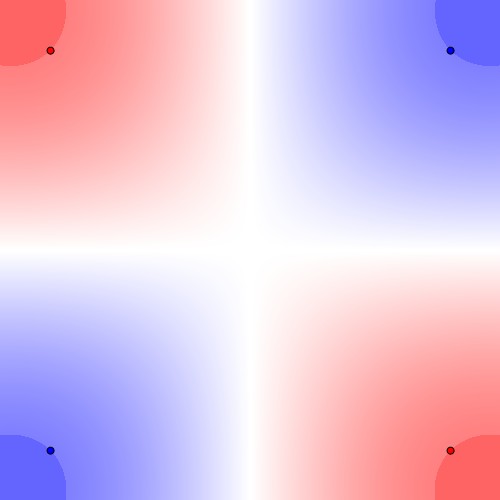

線形分離不可能

const double cost = 10000;

List<Vector> positive_vectors = new List<Vector>(), negative_vectors = new List<Vector>();

positive_vectors.Add(new Vector(-1, +1));

positive_vectors.Add(new Vector(+1, -1));

negative_vectors.Add(new Vector(+1, +1));

negative_vectors.Add(new Vector(-1, -1));

SupportVectorMachine linear_svm = new LinearSupportVectorMachine(cost: cost);

linear_svm.Learn(2, positive_vectors, negative_vectors);

Plot(linear_svm, positive_vectors, negative_vectors, $"s2_linear_cost{cost}_svm.png");

SupportVectorMachine gaussian_svm = new GaussianSupportVectorMachine(cost: cost, sigma:1);

gaussian_svm.Learn(2, positive_vectors, negative_vectors);

Plot(gaussian_svm, positive_vectors, negative_vectors, $"s2_gaussian_cost{cost}_svm.png");

Cost=100

Cost=1000

Cost=10000

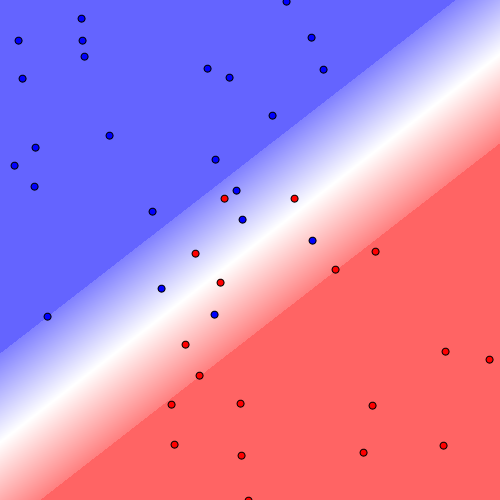

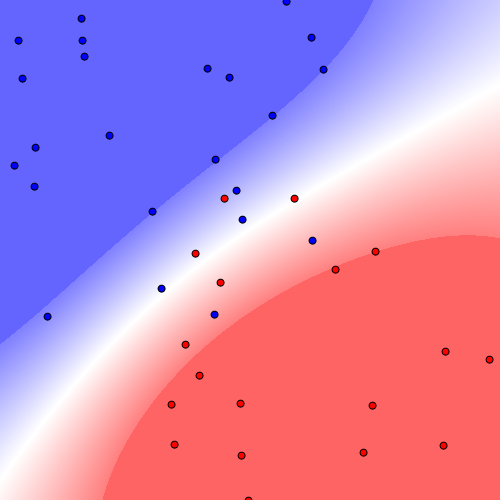

ランダム配置

Random random = new Random(10);

const double cost = 10000;

List<Vector> positive_vectors = new List<Vector>(), negative_vectors = new List<Vector>();

Vector positive_center = new Vector(-0.5, -0.5), negative_center = new Vector(+0.5, +0.5);

for(int i = 0; i < 25; i++) {

positive_vectors.Add(positive_center + new Vector(1.8 * random.NextDouble() - 0.9, 1.8 * random.NextDouble() - 0.9));

}

for(int i = 0; i < 20; i++) {

negative_vectors.Add(negative_center + new Vector(1.8 * random.NextDouble() - 0.9, 1.8 * random.NextDouble() - 0.9));

}

SupportVectorMachine linear_svm = new LinearSupportVectorMachine(cost: cost);

linear_svm.Learn(2, positive_vectors, negative_vectors);

Plot(linear_svm, positive_vectors, negative_vectors, $"s3_linear_cost{cost}_svm.png");

SupportVectorMachine gaussian_svm = new GaussianSupportVectorMachine(cost: cost, sigma:1);

gaussian_svm.Learn(2, positive_vectors, negative_vectors);

Plot(gaussian_svm, positive_vectors, negative_vectors, $"s3_gaussian_cost{cost}_svm.png");

Cost=100

Cost=1000

Cost=10000

サンプルコードPlot関数

static void Plot(SupportVectorMachine svm, List<Vector> positive_vectors, List<Vector> negative_vectors, string filepath) {

Bitmap image = new Bitmap(500, 500);

Func<double, Color> color_func = (s) => {

int d = (int)((Math.Abs(s) >= 1) ? 100 : (256 - 128 * Math.Abs(s)));

if(Math.Abs(s) < 1.0e-4) {

return Color.FromArgb(255, 255, 255);

}

return (s < 0) ? Color.FromArgb(255, d, d) : Color.FromArgb(d, d, 255);

};

for(int x, y = 0; y < image.Height; y++) {

for(x = 0; x < image.Width; x++) {

double vx = (x - image.Width / 2) / (image.Width * 0.4);

double vy = (y - image.Height / 2) / (image.Height * 0.4);

double s = svm.ClassifyRaw(new Vector(vx, vy));

image.SetPixel(x, y, color_func(s));

}

}

using(Graphics g = Graphics.FromImage(image)) {

g.SmoothingMode = System.Drawing.Drawing2D.SmoothingMode.HighQuality;

foreach(var positive_vector in positive_vectors) {

int x = (int)((4 * positive_vector.X + 5) / 10 * image.Width);

int y = (int)((4 * positive_vector.Y + 5) / 10 * image.Height);

g.DrawEllipse(new Pen(Color.Black, 2), new Rectangle(new Point(x - 3, y - 3), new Size(6, 6)));

g.FillEllipse(new SolidBrush(Color.Blue), new Rectangle(new Point(x - 3, y - 3), new Size(6, 6)));

}

foreach(var negative_vector in negative_vectors) {

int x = (int)((4 * negative_vector.X + 5) / 10 * image.Width);

int y = (int)((4 * negative_vector.Y + 5) / 10 * image.Height);

g.DrawEllipse(new Pen(Color.Black, 2), new Rectangle(new Point(x - 3, y - 3), new Size(6, 6)));

g.FillEllipse(new SolidBrush(Color.Red), new Rectangle(new Point(x - 3, y - 3), new Size(6, 6)));

}

}

image.Save(filepath, ImageFormat.Png);

}

サポートベクタマシンのコード

namespace Clustering {

/// <summary>線形サポートベクタマシン</summary>

public class LinearSupportVectorMachine : SupportVectorMachine {

/// <summary>コンストラクタ</summary>

/// <param name="cost">誤識別に対するペナルティの大きさ</param>

public LinearSupportVectorMachine(double cost) : base(cost){ }

/// <summary>カーネル関数</summary>

protected override double Kernel(Vector vector1, Vector vector2) {

double sum = 0;

for(int i = 0; i < VectorDim; i++) {

sum += vector1[i] * vector2[i];

}

return sum;

}

}

/// <summary>ガウシアンサポートベクタマシン</summary>

public class GaussianSupportVectorMachine : SupportVectorMachine {

private double sigma, gamma;

/// <param name="cost">誤識別に対するペナルティの大きさ</param>

/// <param name="sigma">ガウシアン関数の尺度パラメータ</param>

public GaussianSupportVectorMachine(double cost, double sigma) : base(cost){

this.Sigma = sigma;

}

/// <summary>ガウシアン関数の尺度パラメータ</summary>

public double Sigma {

get {

return sigma;

}

protected set {

sigma = value;

gamma = 1 / (2 * sigma * sigma);

}

}

/// <summary>カーネル関数</summary>

protected override double Kernel(Vector vector1, Vector vector2) {

double norm = 0;

for(int i = 0; i < vector1.Dim; i++) {

double d = vector1[i] - vector2[i];

norm += d * d;

}

return Math.Exp(-gamma * norm);

}

}

/// <summary>サポートベクタマシン</summary>

public abstract class SupportVectorMachine : IClusteringMethod {

protected class WeightVector {

public Vector Vector { get; set; }

public double Weight { get; set; }

}

double bias, cost;

List<WeightVector> support_vectors;

/// <summary>コンストラクタ</summary>

/// <param name="cost">誤識別に対するペナルティの大きさ</param>

public SupportVectorMachine(double cost) {

if(!(cost > 0)) {

throw new ArgumentException(nameof(cost));

}

Initialize();

this.cost = cost;

}

/// <summary>データクラス数</summary>

/// <remarks>SVMは常に2</remarks>

public int GroupCount => 2;

/// <summary>ベクトルの次元数</summary>

public int VectorDim {

get; private set;

}

/// <summary>単一サンプルを分類</summary>

/// <param name="vector">サンプルベクタ</param>

public int Classify(Vector vector) {

return ClassifyRaw(vector) > 0 ? +1 : ClassifyRaw(vector) < 0 ? -1 : 0;

}

/// <summary>単一サンプルを分類</summary>

/// <param name="vector">サンプルベクタ</param>

/// <param name="threshold">弁別しきい値</param>

public int Classify(Vector vector, double threshold) {

return ClassifyRaw(vector) > threshold ? +1 : ClassifyRaw(vector) < -threshold ? -1 : 0;

}

/// <summary>複数サンプルを分類</summary>

/// <param name="vectors">サンプルベクタ集合</param>

public IEnumerable<int> Classify(IEnumerable<Vector> vectors) {

return vectors.Select((vector) => Classify(vector));

}

/// <summary>複数サンプルを分類</summary>

/// <param name="vectors">サンプルベクタ集合</param>

/// <param name="threshold">弁別しきい値</param>

public IEnumerable<int> Classify(IEnumerable<Vector> vectors, double threshold) {

return vectors.Select((vector) => Classify(vector, threshold));

}

/// <summary>単一サンプルの識別値</summary>

public double ClassifyRaw(Vector vector) {

if(vector == null || vector.Dim != VectorDim) {

throw new ArgumentException();

}

double s = -bias;

foreach(var support_vector in support_vectors) {

s += support_vector.Weight * Kernel(vector, support_vector.Vector);

}

return s;

}

/// <summary>複数サンプルの識別値</summary>

public IEnumerable<double> ClassifyRaw(IEnumerable<Vector> vectors) {

return vectors.Select((vector) => ClassifyRaw(vector));

}

/// <summary>学習</summary>

/// <param name="vector_dim">サンプルベクタ次元数</param>

/// <param name="vectors_groups">データクラスごとのサンプルベクタ集合</param>

/// <remarks>サンプルベクタ集合は正例と負例の2つ</remarks>

public void Learn(int vector_dim, params List<Vector>[] vectors_groups) {

Initialize();

ValidateSample(vector_dim, vectors_groups);

// サポートベクターとなる最小のベクトル重み

double epsilon = 1.0e-3;

// ベクトルの次元数

VectorDim = vector_dim;

// ベクトル

List<Vector> positive_vectors = vectors_groups[0];

List<Vector> negative_vectors = vectors_groups[1];

List<Vector> inputs = new List<Vector>();

inputs.AddRange(positive_vectors);

inputs.AddRange(negative_vectors);

// ラベル

double[] outputs = (new double[inputs.Count]).Select((_, i) => i < positive_vectors.Count ? +1.0 : -1.0).ToArray();

// 逐次最小問題最適化法実行

var smo = new SequentialMinimalOptimization(inputs.ToArray(), outputs, cost, Kernel);

smo.Optimize();

bias = smo.Bias;

double[] vector_weight = smo.VectorWeight;

//サポートベクターの格納

for(int i = 0; i < vector_weight.Length; i++) {

if(vector_weight[i] > epsilon) {

var wvec = new WeightVector { Weight = vector_weight[i] * outputs[i], Vector = (Vector)inputs[i].Clone() };

support_vectors.Add(wvec);

}

}

}

/// <summary>カーネル関数</summary>

protected abstract double Kernel(Vector vector1, Vector vector2);

/// <summary>初期化</summary>

public void Initialize() {

bias = 0;

support_vectors = new List<WeightVector>();

}

/// <summary>サンプルの正当性を検証</summary>

private void ValidateSample(int vector_dim, List<Vector>[] vectors_groups) {

if(vector_dim < 1) {

throw new ArgumentException(nameof(vector_dim));

}

if(vectors_groups == null) {

throw new ArgumentNullException(nameof(vectors_groups));

}

if(vectors_groups.Length != GroupCount) {

throw new ArgumentException(nameof(vectors_groups));

}

foreach(var vectors in vectors_groups) {

if(vectors.Count < 1) {

throw new ArgumentException(nameof(vectors_groups));

}

foreach(var vector in vectors) {

if(vector.Dim != vector_dim) {

throw new ArgumentException(nameof(vectors_groups));

}

}

}

}

}

}

逐次最小問題最適化法のコード

namespace Clustering {

/// <summary>逐次最小問題最適化法</summary>

public class SequentialMinimalOptimization {

private int vectors;

private Vector[] inputs;

private double[] outputs;

private double bias, cost, tolerance, epsilon;

private double[] a, errors;

private Random random;

private Func<Vector, Vector, double> kernel;

/// <summary>コンストラクタ</summary>

public SequentialMinimalOptimization(Vector[] inputs, double[] outputs, double cost, Func<Vector, Vector, double> kernel) {

this.vectors = inputs.Length;

this.inputs = inputs;

this.outputs = outputs;

this.cost = cost;

this.kernel = kernel;

}

/// <summary>ベクトル重み</summary>

public double[] VectorWeight => a;

/// <summary>バイアス</summary>

public double Bias => bias;

/// <summary>最適化シークエンスを実行</summary>

public void Optimize() {

errors = new double[vectors];

random = new Random(0);

a = new double[vectors];

bias = 0;

tolerance = 1e-3;

epsilon = 1e-3;

int changed_count = 0;

bool examine_all = true;

while(changed_count > 0 || examine_all) {

changed_count = 0;

if(examine_all) {

for(int i = 0; i < vectors; i++)

changed_count += ExamineExample(i);

}

else {

for(int i = 0; i < vectors; i++)

if(a[i] != 0 && a[i] != cost)

changed_count += ExamineExample(i);

}

if(examine_all)

examine_all = false;

else if(changed_count == 0)

examine_all = true;

}

}

/// <summary>i2と同時に最適化するi1を探索し2パラメータの最適化を実行</summary>

private int ExamineExample(int i2) {

int i1;

Vector v2 = inputs[i2];

double y2 = outputs[i2];

double a2 = a[i2];

double e2 = (a2 > 0 && a2 < cost) ? errors[i2] : ComputeF(v2) - y2;

double r2 = y2 * e2;

if(!(r2 < -tolerance && a2 < cost) && !(r2 > tolerance && a2 > 0))

return 0;

// 誤差値の差分を最大化するi1の探索

i1 = i2;

double maxerr = 0;

for(int i = 0; i < inputs.Length; i++) {

if(i == i2)

continue;

if(a[i] > 0 && a[i] < cost) {

double e1 = errors[i];

double err = Math.Abs(e2 - e1);

if(err > maxerr) {

maxerr = err;

i1 = i;

}

}

}

if(i1 != i2 && TakeStep(i1, i2))

return 1;

// i1をランダムに決定

int start = random.Next(inputs.Length);

for(i1 = start; i1 < inputs.Length; i1++) {

if(a[i1] > 0 && a[i1] < cost)

if(TakeStep(i1, i2))

return 1;

}

for(i1 = 0; i1 < start; i1++) {

if(a[i1] > 0 && a[i1] < cost)

if(TakeStep(i1, i2))

return 1;

}

start = random.Next(inputs.Length);

for(i1 = start; i1 < inputs.Length; i1++) {

if(TakeStep(i1, i2))

return 1;

}

for(i1 = 0; i1 < start; i1++) {

if(TakeStep(i1, i2))

return 1;

}

return 0;

}

/// <summary>2パラメータの最適化を実行</summary>

private bool TakeStep(int i1, int i2) {

if(i1 == i2)

return false;

Vector v1 = inputs[i1], v2 = inputs[i2];

double a1_old = a[i1], a2_old = a[i2];

double y1 = outputs[i1], y2 = outputs[i2];

double e1 = (a1_old > 0 && a1_old < cost) ? errors[i1] : ComputeF(v1) - y1;

double e2 = (a2_old > 0 && a2_old < cost) ? errors[i2] : ComputeF(v2) - y2;

double s = y1 * y2;

// a1,a2を最適化

double clip_l, clip_h;

if(y1 != y2) {

clip_l = Math.Max(0, a2_old - a1_old);

clip_h = Math.Min(cost, cost + a2_old - a1_old);

}

else {

clip_l = Math.Max(0, a2_old + a1_old - cost);

clip_h = Math.Min(cost, a2_old + a1_old);

}

if(clip_l >= clip_h)

return false;

double k11, k22, k12, eta;

k11 = kernel(v1, v1);

k12 = kernel(v1, v2);

k22 = kernel(v2, v2);

eta = k11 + k22 - 2 * k12;

double a1_new, a2_new;

if(eta > 0) {

a2_new = a2_old - y2 * (e2 - e1) / eta;

if(a2_new < clip_l)

a2_new = clip_l;

else if(a2_new > clip_h)

a2_new = clip_h;

}

else {

double l1 = a1_old + s * (a2_old - clip_l);

double h1 = a1_old + s * (a2_old - clip_h);

double f1 = y1 * (e1 + bias) - a1_old * k11 - s * a2_old * k12;

double f2 = y2 * (e2 + bias) - a2_old * k22 - s * a1_old * k12;

double obj_l = -0.5 * l1 * l1 * k11 - 0.5 * clip_l * clip_l * k22 - s * clip_l * l1 * k12 - l1 * f1 - clip_l * f2;

double obj_h = -0.5 * h1 * h1 * k11 - 0.5 * clip_h * clip_h * k22 - s * clip_h * h1 * k12 - h1 * f1 - clip_h * f2;

if(obj_l > obj_h + epsilon)

a2_new = clip_l;

else if(obj_l < obj_h - epsilon)

a2_new = clip_h;

else

a2_new = a2_old;

}

if(Math.Abs(a2_new - a2_old) < epsilon * (a2_new + a2_old + epsilon))

return false;

a1_new = a1_old + s * (a2_old - a2_new);

if(a1_new < 0) {

a2_new += s * a1_new;

a1_new = 0;

}

else if(a1_new > cost) {

double d = a1_new - cost;

a2_new += s * d;

a1_new = cost;

}

// バイアスを更新

double b1 = 0, b2 = 0;

double new_bias = 0, delta_bias;

if(a1_new > 0 && a1_new < cost) {

new_bias = e1 + y1 * (a1_new - a1_old) * k11 + y2 * (a2_new - a2_old) * k12 + bias;

}

else {

if(a2_new > 0 && a2_new < cost) {

new_bias = e2 + y1 * (a1_new - a1_old) * k12 + y2 * (a2_new - a2_old) * k22 + bias;

}

else {

b1 = e1 + y1 * (a1_new - a1_old) * k11 + y2 * (a2_new - a2_old) * k12 + bias;

b2 = e2 + y1 * (a1_new - a1_old) * k12 + y2 * (a2_new - a2_old) * k22 + bias;

new_bias = (b1 + b2) / 2;

}

}

delta_bias = new_bias - bias;

bias = new_bias;

// 誤差値を更新

double t1 = y1 * (a1_new - a1_old);

double t2 = y2 * (a2_new - a2_old);

for(int i = 0; i < inputs.Length; i++) {

if(0 < a[i] && a[i] < cost) {

Vector vi = inputs[i];

errors[i] += t1 * kernel(v1, vi) + t2 * kernel(v2, vi) - delta_bias;

}

}

errors[i1] = errors[i2] = 0;

// a1,a2を格納

a[i1] = a1_new;

a[i2] = a2_new;

return true;

}

/// <summary>F値を計算</summary>

private double ComputeF(Vector v) {

double sum = -bias;

for(int i = 0; i < inputs.Length; i++) {

if(a[i] > 0)

sum += a[i] * outputs[i] * kernel(inputs[i], v);

}

return sum;

}

}

}

クラスタリング手法インタフェースクラス

サポートベクタマシン理論編